After submitting its proposal, the team from ATR Lab @ Kent State University has been selected as one of the top 10 teams to participate in the onsite NASA SUITS challenge at Johnson Space Center. The team continues to develop its proposed AARON (Assistive Augmented Reality Operations and Navigation) system. The system combines the ongoing work developed at the ATR Lab in the fields of immersive telepresence and coactive / collaborative interaction models between synthetic and human agents. More information is available below.

NASA SUITS Challenge 2020

ATR Lab in Top 10 Teams

About NASA SUITS Challenge

NASA SUITS (Spacesuit User Interface Technologies for Students) challenges students to design and create spacesuit information displays within augmented reality (AR) environments. As NASA pursues Artemis, which aims to land American astronauts on the Moon by 2024, the agency will accelerate investment in surface architecture and technology development. For exploration, it is essential that crewmembers on spacewalks are equipped with the appropriate human-autonomy enabling technologies necessary for the elevated demands of lunar surface exploration and extreme terrestrial access. The SUITS 2020 challenges target key aspects of the Artemis mission.

Our Vision for the SUITS Challenge

Our proposed solution for the NASA SUITS challenge applies the technologies developed at the ATR Lab and our ongoing research on immersive telepresence robotics and control modalities that allow for coactivity between human and robotic agents. In particular, our proposal focuses on leveraging an astronaut’s biosignals to infer the astronaut’s physical and cognitive state during an EVA. This allows our system, called AARON, to provide virtual assistance (synthetic-to-human collaboration) or to share anomalies with other crewmembers or parties involved in a lunar mission (human-to-human collaboration).
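
As a rough illustration of this idea, the sketch below routes a biosignal sample either to virtual assistance or to sharing an anomaly with other crewmembers. It is only a minimal sketch and not the AARON implementation: the `Biosignals` fields, the `route` function, and every threshold value are hypothetical names and numbers chosen for the example, and they are not taken from our proposal or from any physiological guideline.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    VIRTUAL_ASSISTANCE = "virtual_assistance"  # synthetic-to-human collaboration
    SHARE_WITH_CREW = "share_with_crew"        # human-to-human collaboration


@dataclass
class Biosignals:
    """One hypothetical sample of astronaut biosignals."""
    heart_rate_bpm: float
    respiration_rate_bpm: float


# Hypothetical thresholds, for illustration only (not physiological guidance).
ELEVATED_HEART_RATE = 140.0
CRITICAL_HEART_RATE = 170.0
ELEVATED_RESPIRATION = 30.0


def route(sample: Biosignals) -> Action:
    """Map a biosignal sample to the kind of collaboration to trigger."""
    # A critical reading is treated as an anomaly to share with other crewmembers.
    if sample.heart_rate_bpm >= CRITICAL_HEART_RATE:
        return Action.SHARE_WITH_CREW
    # An elevated reading triggers assistance from the synthetic agent.
    if (sample.heart_rate_bpm >= ELEVATED_HEART_RATE
            or sample.respiration_rate_bpm >= ELEVATED_RESPIRATION):
        return Action.VIRTUAL_ASSISTANCE
    return Action.NONE
```

A real system would likely infer state from several signals over time rather than from fixed cutoffs on a single sample; the fixed thresholds here only make the two collaboration paths easy to see.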

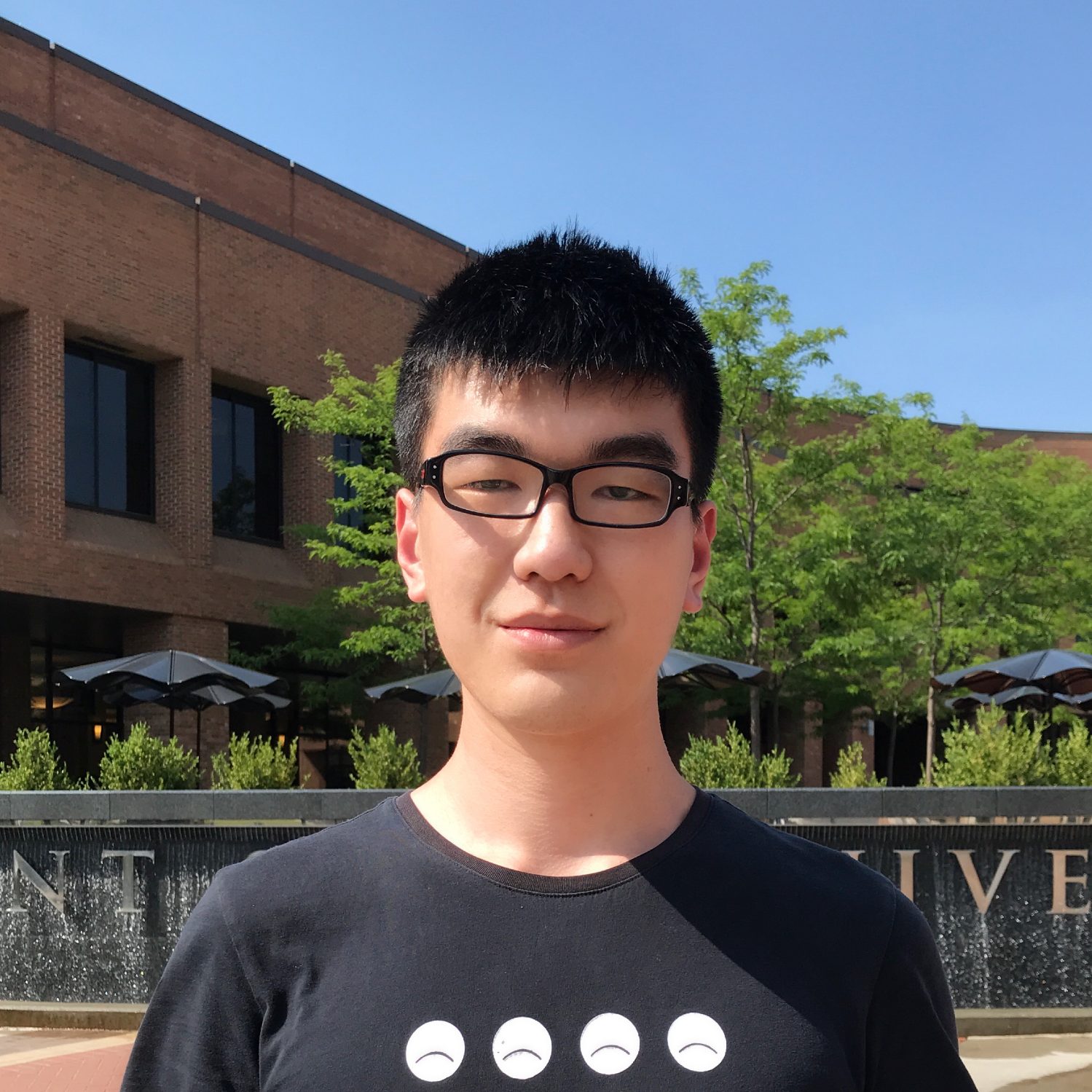

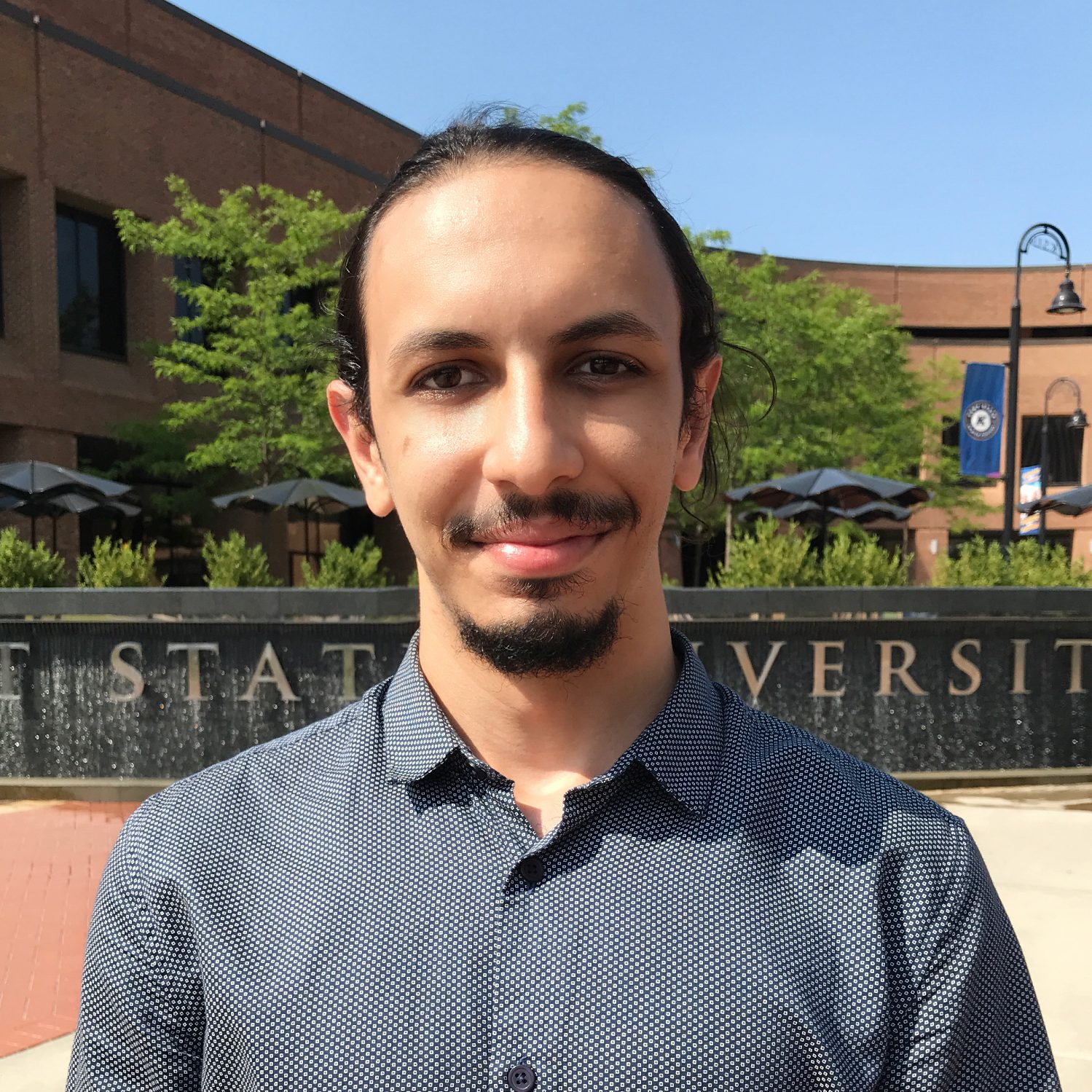

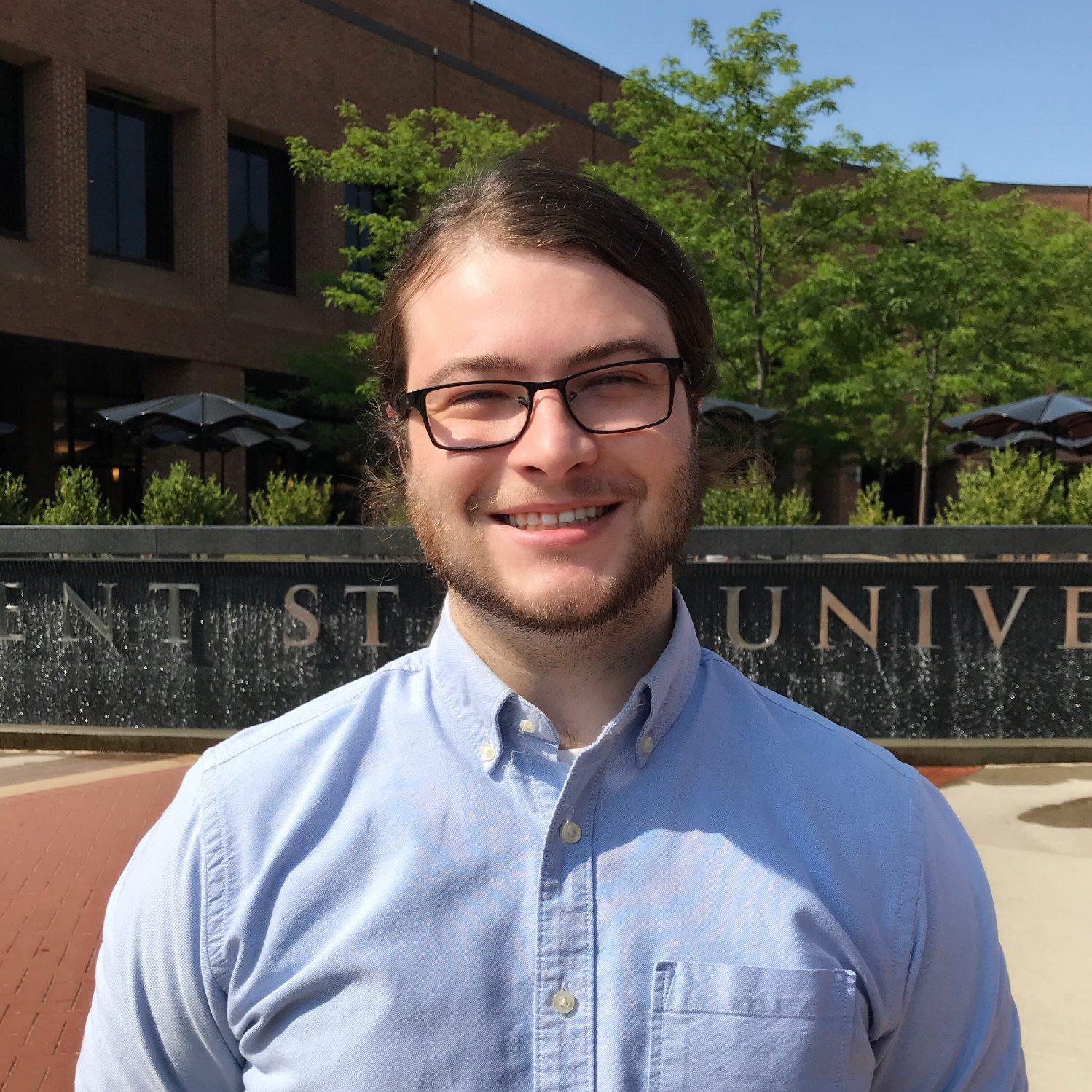

The Team

The ATR Flux team (ATR Lab @ Kent State) is currently led by Irvin Steve Cardenas and is composed of a mix of graduate and undergraduate students with diverse backgrounds (software engineering, mechatronics, fashion design, education). The team is under the lead supervision of Dr. Jong-Hoon Kim (director of the ATR Lab) and Professor Margarita Benitez (director of the TechStyle Lab). For a full list of team members and advisors, please see below.

Help the ATR Flux team by donating or following us on social media: @atrlab_kent

Our Vision: Context

ISS EVA operations involve a fleet of ground support personnel who use custom console displays and perform manual tasks, such as taking handwritten notes, to monitor suit/vehicle systems and to passively adjust EVA timeline elements. In contrast to these well-rehearsed ISS EVAs, lunar exploration EVA is more physically demanding, more hazardous, and less structured. Most critically, the reactive approach in which ground personnel provide real-time solutions to issues or hazards (e.g. hardware configuration, incorrect procedure execution, life support system diagnosis) will not be feasible under the conditions of lunar EVA, i.e. limited communication bandwidth and latency between ground support and in-flight crewmembers.

Our Vision: Approach

This proposal focuses on an Assistive Augmented Reality Operations and Navigation (AARON) system for NASA’s exploration extravehicular mobility unit (xEMU). The AARON system extends our research on immersive control interfaces for humanoid telepresence robots (Cardenas & Kim, 2019), as well as our work on fabricating a telepresence control garment that monitors the operator’s physical state to infer operational performance (Cardenas et al., 2019), a model similar to the EVA Human Health and Performance Model discussed by Abercromby et al. (2019). The core design tenets of our work on interfaces, and of the system presented in this proposal, are: (1) coactivity, (2) seamless collaboration, and (3) non-obtrusiveness, i.e. minimal interference with performance. To elaborate on coactive design (Johnson et al., 2014), the AARON system is designed under the premise that astronauts, and the overall mission, can benefit from interfaces that allow bi-directional collaboration and cooperation between humans and synthetic agents (e.g. voice assistants or robots). For example, during the repair of a rover, an AI agent could provide a set of graphical or textual instructions, but could also attempt to visually recognize parts automatically to enhance the repair experience.

Interaction Design

UI Flow and UX Design

Asset Design

2D and 3D asset design

AR/MR Software

Unity 3D development

Infrastructure & Services

Backend services and data architecture